Choosing the right Dify implementation vendor is an important step, but it’s not a guarantee of success. Many Dify projects still fail even with a capable partner – because the real challenge lies in how both sides collaborate throughout the implementation process.

Dify is a powerful AI low-code platform that allows businesses to build chatbots, RAG systems, and AI workflows quickly. But precisely because it’s “easy to get started,” many underestimate the gap between “the demo works” and “employees actually use it every day.”

This article is for businesses that have already selected a Dify implementation vendor and want to know: what should you be monitoring to prevent the project from falling apart midway?

※ If you’re still in the process of choosing an implementation partner: How to Choose a Dify Vendor →

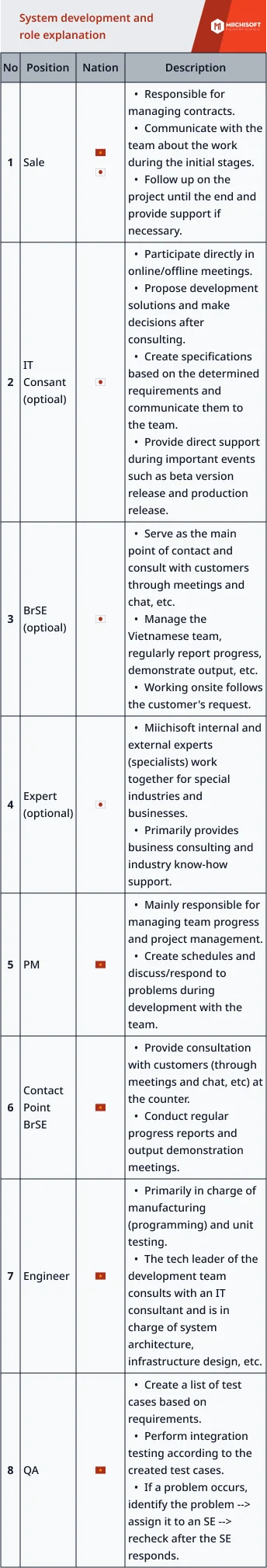

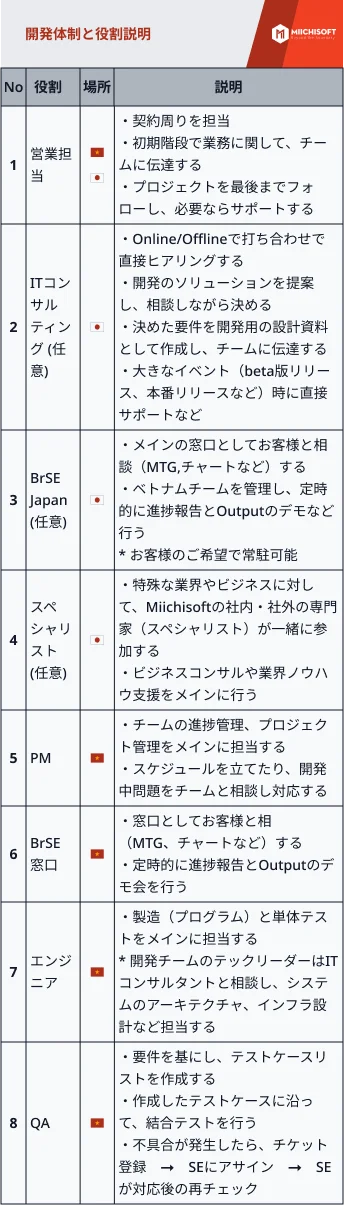

At Which Stage Do Dify Projects Tend to Fail?

Regardless of how experienced your Dify implementation vendor is, risks concentrate at predictable stages. Before diving into specific causes, it helps to understand the lifecycle of a Dify implementation project and what typically goes wrong at each stage.

Stage 1: Requirements Definition

- Both sides align on “what to build, for whom, and what problem to solve.” The biggest risk here is a vague project scope – each side interprets things differently, but no one speaks up.

Stage 2: PoC and Development

- The vendor begins building the solution. The risk lies in expectations diverging from reality – the PoC runs smoothly with sample data but doesn’t reflect the complexity of real-world application.

Stage 3: Testing and Acceptance

- The product is handed to end users for testing. The most common risk is that users don’t genuinely participate, feedback arrives late, and rework has to happen right before the deadline.

Stage 4: Operations and Maintenance

- Go-live succeeds, but after 1-2 months no one uses the system and no one maintains it. This stage has the highest failure rate yet receives the least attention – the project doesn’t “crash”; it simply fades away.

The key takeaway:

- Most Dify projects don’t fail suddenly at a single point. They gradually weaken across each stage – every phase accumulates a little more “debt” until things become irreversible. Businesses need to monitor continuously, not just review when the project is nearly finished.

7 Reasons Dify Projects Fail During Implementation

Understanding why projects fail starts with knowing what to expect from your Dify implementation vendor and what your business must bring to the table.

① Unclear requirements – the vendor makes assumptions, but they’re not quite right

Real-world scenario:

- After the kick-off meeting, the vendor asks, “What specific situations do you want the chatbot to handle?” The business responds vaguely: “Automatically answer customer questions.” The vendor interprets this on their own and starts building. At the demo, the business says: “No, we meant only product-related questions, not technical support.” Back to square one.

Root cause:

- The business hasn’t translated its “business problem” into sufficiently specific “system requirements.” At the same time, the vendor hasn’t probed deeply enough to force the client to clarify before starting development.

Early warning signs:

- After 1-2 initial sprints, both sides are still “re-confirming requirements” instead of “reviewing the product.” The requirements document lacks specific use cases and has no sample input/output examples.

What your business should do:

- Require both sides to complete and sign off on a requirements specification document before development begins. This document must include a list of specific use cases, input/output examples for each scenario, and clear boundaries between “in scope” and “out of scope.” If your Dify implementation vendor doesn’t request a requirements specification document, treat that as a red flag.
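To make the sign-off concrete, the specification can also be kept in a structured form that is easy to check. The Python sketch below is only an illustration: the `UseCase` structure, the `spec_gaps` function, and the sample entries are hypothetical, not part of Dify or any vendor template. It flags in-scope use cases that lack an input/output example, and a specification that lists nothing as out of scope.

```python
from dataclasses import dataclass, field


@dataclass
class UseCase:
    """One row of the requirements specification (hypothetical structure)."""
    name: str
    in_scope: bool
    # Each example is a (sample input, expected output) pair.
    examples: list[tuple[str, str]] = field(default_factory=list)


def spec_gaps(use_cases: list[UseCase]) -> list[str]:
    """Return the problems that should block sign-off."""
    gaps = []
    # A spec that never says what is excluded leaves the boundary open.
    if not any(not uc.in_scope for uc in use_cases):
        gaps.append("no out-of-scope items listed")
    for uc in use_cases:
        if uc.in_scope and not uc.examples:
            gaps.append(f"'{uc.name}' has no input/output example")
    return gaps


# Illustrative entries only.
spec = [
    UseCase("Product questions", True,
            [("Does product A come in blue?", "Yes, product A is available in blue.")]),
    UseCase("Order status", True),
    UseCase("Technical support", False),
]

for gap in spec_gaps(spec):
    print(gap)
```

An empty result does not prove the requirements are right. It only shows that every in-scope use case has at least one example both sides can argue about before development starts.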

② Failure due to lack of genuine end-user involvement

Real-world scenario:

- The IT department works with the vendor to build an internal AI chatbot. When the sales team starts using it, the feedback is: “The interface is hard to use,” “The answers don’t match our daily work context,” “The workflow doesn’t align with our actual processes.” Result: 40% of the system needs to be redesigned.

Root cause:

- A disconnect between the technical team (IT + vendor) and the business team (end users). IT understands the technology but doesn’t deeply understand daily operational workflows. The Dify implementation vendor is even less likely to grasp these nuances without direct contact with actual users.

Early warning signs:

- End users see the product for the first time at the acceptance testing stage – far too late to make adjustments. No business representatives participate in sprint review sessions.

What your business should do:

- Assign at least 1-2 representatives from the department that will use the product to participate from the requirements stage onward. At minimum, they should review and evaluate prototypes after each sprint. Don’t let end users become “outsiders” in a project built for them.

③ No clear criteria to evaluate whether the PoC succeeded or failed

Real-world scenario:

- The vendor demos a PoC chatbot – it answers questions, everyone seems satisfied, and the decision is made to move to production. In actual use, accuracy reaches only 60%, response times are slow, employees are disappointed and revert to old methods. No one defined what “PoC success” meant from the start, so no one caught the problem early enough.

Root cause:

- The PoC is evaluated by gut feeling – “it works, so it’s fine” – rather than against specific, measurable criteria, so there’s no clear line between “meets requirements” and “doesn’t meet requirements.” A reliable Dify implementation vendor should proactively define PoC success metrics before any demo is scheduled; in this scenario, neither side did.

Early warning signs:

- Before the PoC even begins, there’s no evaluation criteria document. The PoC review session is just a demo presentation with no scorecard or metrics.

What your business should do:

- Before starting the PoC, both sides must agree in writing: What is the target accuracy percentage? What is the maximum acceptable response time? Which specific business metrics need to improve? A PoC without measurable criteria is no different from an experiment without a conclusion.
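Once the criteria are written down, the PoC review can be reduced to a small scorecard. The Python sketch below assumes each PoC question has been judged correct or incorrect by a business reviewer and timed. The `PocResult` structure, the `score_poc` function, and both thresholds (85% accuracy, 5 seconds at the 95th percentile) are hypothetical placeholders for whatever your two sides agree on; nothing here is a Dify feature.

```python
import math
from dataclasses import dataclass


@dataclass
class PocResult:
    correct: bool      # reviewer's verdict against the expected answer
    latency_s: float   # seconds from question to complete answer


def p95(values):
    """95th percentile using the nearest-rank method."""
    ordered = sorted(values)
    rank = math.ceil(0.95 * len(ordered))
    return ordered[rank - 1]


def score_poc(results, min_accuracy=0.85, max_p95_latency_s=5.0):
    """Compare measured accuracy and latency with the agreed thresholds."""
    accuracy = sum(r.correct for r in results) / len(results)
    latency = p95([r.latency_s for r in results])
    return {
        "accuracy": accuracy,
        "p95_latency_s": latency,
        "passed": accuracy >= min_accuracy and latency <= max_p95_latency_s,
    }


# Ten reviewed questions: nine correct, one wrong and slower.
results = [PocResult(True, 2.0)] * 9 + [PocResult(False, 4.0)]
print(score_poc(results))
```

The value of a script like this is less the arithmetic than the fact that the thresholds must be typed in before the demo, so “it works, so it’s fine” is no longer an available verdict.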

④ Shallow prompt design – falls apart when facing real-world scenarios

Real-world scenario:

- The chatbot works fine for basic questions. But when employees ask more complex questions with specific context or edge cases, it gives wrong answers, rambles, or “makes things up” (hallucination). Users lose trust after 2-3 incorrect responses and stop using it entirely.

Root cause:

- Prompt design and workflow configuration in Dify fall into the category of “easy to do, hard to do well.” Many vendors or internal teams stop at “the demo works” without investing enough in edge case testing and prompt optimization.

Early warning signs:

- The demo looks great, but testing with real data shows error rates exceeding 30%. The vendor can’t provide a comprehensive test case matrix that includes edge case scenarios.

What your business should do:

- Require the vendor to provide a test case matrix covering both standard workflows and edge cases. Feed real data and real scenarios from the field into testing – not just sample data. Prompt tuning isn’t a one-time task; it’s a continuous, iterative process.
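A test case matrix does not need special tooling. As a minimal Python sketch, each row can hold a category, a question, and a phrase the answer must contain, and a short function can report the error rate per category. Everything here is an assumption for illustration: the rows are invented, `ask` is a placeholder for however your team sends a question to the deployed chatbot, and a substring check is a much cruder test of correctness than a human reviewer.

```python
from collections import defaultdict

# Hypothetical matrix rows: (category, question, phrase the answer must contain)
TEST_MATRIX = [
    ("standard", "What is the warranty period for product A?", "12 months"),
    ("standard", "Which plans include phone support?", "Business"),
    ("edge", "What is the warranty on a product you do not sell?", "do not have information"),
    ("edge", "warranty??? prodct A (bought abroad)", "12 months"),
]


def error_rates(ask, matrix):
    """Run every row through `ask` and return the error rate per category."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for category, question, must_contain in matrix:
        totals[category] += 1
        answer = ask(question)
        if must_contain.lower() not in answer.lower():
            failures[category] += 1
    return {category: failures[category] / totals[category] for category in totals}


def fake_ask(question):
    # Stand-in for the real chatbot call; it gives the same answer to everything.
    return "The warranty period is 12 months."


print(error_rates(fake_ask, TEST_MATRIX))
```

Reporting standard and edge cases separately matters: a chatbot can look fine on an overall average while failing most of the edge cases that cost it users’ trust.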

⑤ “It seems slightly off-scope, but it’s a small difference – just keep going”

Real-world scenario:

- Each sprint, the vendor delivers output “as requested.” But every time the business reviews it, things feel “slightly different from what we had in mind.” No one speaks up because each time the gap is small. After 3-4 sprints, the cumulative gap is enormous. In the final stage, conflict erupts: “This is not what we needed.”

Root cause:

- A lack of alignment mechanisms between both sides at a sufficiently senior level. There may be weekly meetings, but only at the project manager level. Or meetings happen but minutes aren’t recorded clearly, and each side “remembers” things differently.

Early warning signs:

- Deliverables consistently need “minor tweaks” but no one escalates to leadership. Meeting minutes are missing or lack detail. Both sides describe the same feature using different terminology.

What your business should do:

- Establish a progress review every two weeks with leadership participation – not just the project manager. Every decision must be documented in meeting minutes confirmed by both sides. When any gap is detected, no matter how small, address it immediately. Never let it accumulate.

⑥ Security issues discovered midway – the project has to stop for remediation

Real-world scenario:

- Development is nearly complete, and the acceptance testing phase is about to begin. The IT security team finally reviews the project and discovers: data is being sent through unapproved cloud services, there’s no process for handling personal information, and the system architecture doesn’t meet internal security standards. Result: the architecture must be redesigned, causing a 2-3 month delay and significant additional costs.

Root cause:

- Security review wasn’t included in the early stages of the project. The IT security team wasn’t involved from the beginning because everyone assumed “this is an AI project, not an infrastructure project.” In reality, any AI project that processes corporate data must comply with security policies.

Early warning signs:

- No security checklist has been approved before development begins. The IT security team doesn’t know which services the project is using or where data is being stored.

What your business should do:

- Include a representative from the security or IT infrastructure team in the project from the requirements definition stage. Require the vendor to provide a security design document before writing any code. Security isn’t something you check at the end – it needs to be designed in from the start.

⑦ Go-live succeeds, but no one updates or maintains the system – it “dies” within months

Real-world scenario:

- The project goes live successfully. The first month, everything runs well. In the second month, prompts need updating because of new products – no one knows how. In the third month, accuracy drops because data has changed – no one is monitoring. By the fourth month, employees stop using it. By the fifth month, the system becomes a “dead asset.”

Root cause:

- The project is treated as a “build once and done” effort instead of a system that requires ongoing operation and continuous improvement. There’s no handover plan and no training for the internal team.

Early warning signs:

- The system is about to go live but there’s no operations manual. No one has been designated as responsible for maintenance. There’s no internal training plan.

What your business should do:

- Require the Dify implementation vendor to deliver complete operations documentation and train at least 2 internal staff members on the basics: updating prompts, adding to the knowledge base, and monitoring performance.

- Clearly define who is responsible for maintenance after the system goes into production. If you need long-term vendor support during the initial period, include this clause in the contract from the very beginning.
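“Monitoring performance” can start very simply. One possible approach, sketched below in Python, is for the designated owner to review a small sample of answers each month and compare the accuracy with the level measured at go-live. The function name, the month labels, and the 10-point tolerance are hypothetical; the right sample size and threshold depend on your own acceptance criteria.

```python
def accuracy_alerts(monthly_verdicts, baseline, max_drop=0.10):
    """Return (month, accuracy) for months that fell too far below the baseline.

    monthly_verdicts maps a month label to reviewer verdicts
    (True = answer was correct) for a sample of real conversations.
    """
    alerts = []
    for month, verdicts in sorted(monthly_verdicts.items()):
        accuracy = sum(verdicts) / len(verdicts)
        if baseline - accuracy > max_drop:
            alerts.append((month, round(accuracy, 2)))
    return alerts


# Illustrative samples: accuracy slides after the product data changes.
samples = {
    "month-1": [True] * 9 + [False],        # 90%
    "month-2": [True] * 17 + [False] * 3,   # 85%
    "month-3": [True] * 7 + [False] * 3,    # 70%
}

print(accuracy_alerts(samples, baseline=0.90))
```

A check like this only helps if someone owns it, which is why the maintenance owner needs to be named before go-live rather than after the first complaint.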

Project Health Checklist for Working with a Dify Implementation Vendor

If more than 3 items remain unaddressed, the project is at high risk and needs immediate intervention.

Requirements Definition Stage

- Has the requirements specification been signed off by leadership?

- Have representatives from the user department been involved from the start?

- Have security requirements been confirmed with the IT security team?

- Is the project scope clearly defined between “in scope” and “out of scope”?

PoC Stage

- Have PoC success criteria been defined with specific metrics?

- Has testing been conducted with real data, not just sample data?

- Is there a formal written PoC evaluation report?

Development Stage

- Are there progress reviews every two weeks with leadership participation?

- Are all decisions documented in minutes confirmed by both sides?

- Has the vendor provided a test case matrix including edge case scenarios?

- Has the security design document been approved?

Acceptance Testing and Operations Stage

- Are end users genuinely participating in acceptance testing?

- Has the operations manual been delivered?

- Have at least 2 internal staff been trained on operations?

- Has a clear owner been designated for ongoing maintenance?

- Does the vendor offer a post-go-live support package?
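If you want to track the checklist between reviews, the “more than 3 unaddressed items” rule is easy to automate. The Python sketch below is one way to do it; the `assess` function and the shortened item names are illustrative assumptions, only three of the four stages are filled in to keep it short, and the status wording is a suggestion.

```python
# True = addressed, False = still open. Item names are shortened from the checklist above.
checklist = {
    "Requirements definition": {
        "Specification signed off by leadership": True,
        "User department involved from the start": True,
        "Security requirements confirmed": False,
        "Scope boundaries defined": True,
    },
    "PoC": {
        "Success criteria with metrics": False,
        "Tested with real data": False,
        "Written evaluation report": True,
    },
    "Development": {
        "Biweekly reviews with leadership": True,
        "Decisions recorded in confirmed minutes": False,
        "Test case matrix with edge cases": True,
        "Security design approved": True,
    },
}


def assess(checklist, max_open=3):
    """Return a status plus the list of items that are still open."""
    open_items = [
        f"{stage}: {item}"
        for stage, items in checklist.items()
        for item, done in items.items()
        if not done
    ]
    status = "high risk" if len(open_items) > max_open else "keep monitoring"
    return status, open_items


status, open_items = assess(checklist)
print(status)
for line in open_items:
    print(" -", line)
```

Listing the open items by stage, rather than just counting them, shows where the “debt” is building up and who needs to be brought into the next review.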

How Involved Should Your Business Be When Working with a Dify Implementation Vendor?

When partnering with a Dify implementation vendor, the biggest mistake businesses make is treating the relationship as a simple outsourcing arrangement.

Be a collaborative partner, not just a “client placing an order.”

A typical pattern is handing the project to IT, who hands it to the vendor, and then everyone waits for results. AI projects are different from traditional IT projects – they require continuous involvement from the business side to refine business logic, evaluate output quality, and make decisions quickly. A vendor cannot succeed if the business simply “assigns the work and waits.”

The speed of your decisions determines the speed of the project.

AI projects need fast iteration cycles. If every small decision must go through 3 layers of internal approval, the project will slow down and lose momentum. Businesses should establish clear delegation protocols: which decisions the project manager can handle independently, which need to be escalated, and the maximum turnaround time for escalations.

Beware the “outsourcing trap.”

“We’ve hired a professional company, let them handle it” – this mindset is dangerous. Not because the vendor isn’t capable, but because the final outcome depends on two-way collaboration. Your business doesn’t need deep technical knowledge, but you do need to know: what stage is the project in, what’s being delayed, and when is intervention needed.

Review at every phase transition.

Leadership doesn’t need to attend weekly review meetings, but every time the project moves to a new stage (requirements → PoC → development → acceptance testing → operations), your business needs to answer two questions: Is the project still on track? What risks need to be addressed before moving forward?

This is exactly the approach Miichisoft calls “AI Co-Creation” – not simply receiving requirements and delivering a product, but walking alongside the business at every stage, bringing the right people in at the right time, and ensuring your business always has the information needed to make the right decisions at the right moment.

Conclusion

Dify projects rarely fail because of poor technology or an incompetent vendor. Most failures stem from how a business and its Dify implementation vendor collaborate during implementation: rushing ahead with unclear requirements, forgetting end users, skipping PoC criteria, settling for superficial prompt design, ignoring misalignment, catching security issues too late, and not preparing for the post-go-live phase.

The 7 causes above are the 7 points your business should continuously monitor. You don’t need to understand every line of code – but you do need to recognize when the project is showing warning signs and know where to intervene before things go too far.

Frequently Asked Questions

Q1: After choosing a Dify implementation vendor, what’s the first thing to do?

The most important first step is completing a requirements specification document with the participation of all three parties: leadership, end-user representatives, and the vendor. This document must include specific use cases with input/output examples, clear scope boundaries, PoC evaluation criteria with measurable metrics, and security requirements confirmed by the IT team. Do not begin development until this document has been formally signed off.

Q2: What should you check during the Dify PoC stage?

The PoC needs quantifiable success criteria agreed upon in advance, including: an accuracy target (e.g., ≥85%), maximum response time, and specific business metrics to improve. Most importantly, the PoC must be tested with real data from actual operations, not just sample data. The PoC evaluation session should include a formal scorecard – not just a demo presentation.

Q3: How can you detect early signs that a Dify project is at risk of failing?

There are 5 early warning signs: (1) after the first 1-2 sprints, both sides are still re-confirming requirements instead of reviewing the product, (2) end users haven’t seen the product until the acceptance testing stage, (3) deliverables consistently need “minor tweaks” but no one escalates, (4) the demo looks great but real-data testing shows error rates exceeding 30%, (5) go-live is approaching but there’s no operations manual or designated person responsible for maintenance.

Q4: What’s needed to ensure Dify is actually used after deployment?

Three elements are essential: (1) at least 2 internal staff trained on basic operations – updating prompts, adding to the knowledge base, and monitoring performance, (2) a complete operations manual delivered by the vendor before go-live, and (3) a post-go-live support package from the vendor for at least the first 1-3 months. If any of these elements is missing, the system faces a very high risk of becoming a “dead asset” within 3-5 months.

Q5: How do you prevent misalignment with your Dify implementation vendor?

Three core measures: (1) every decision and change must be recorded in meeting minutes confirmed by both sides, (2) establish progress reviews every two weeks with leadership participation – not just the project manager, and (3) when any misalignment is detected, no matter how small, address it immediately instead of letting it accumulate. Small gaps that build up over 3-4 sprints will erupt into major conflicts at the end of the project.
